Automated email testing catches broken links, rendering errors, and deliverability problems before your subscribers see them. It combines specialized tools with API-driven workflows to verify email content across 50+ email clients, test authentication protocols, and simulate spam filters—without manual checking. Email remains the highest-performing digital marketing channel, delivering an average return of $36-42 per dollar spent, making automated testing critical for protecting that ROI.

Busy marketing teams need testing that runs continuously. Manual testing can't keep pace with daily campaign volumes or catch every inbox rendering variation. Automation solves this.

We'll examine how automated email testing works, compare the leading tools, and show you implementation strategies that save hours while improving deliverability. You'll understand testing workflows that catch problems early and maintain subscriber trust.

What Email Testing Automation Actually Does

Email testing automation verifies your messages work correctly before they reach subscribers. It checks rendering, validates links, tests authentication, and monitors deliverability across different email clients.

Think of it as quality assurance for your inbox presence. The automation runs checks continuously, catching errors humans would miss during manual reviews.

The Core Components of Automated Testing

Automated email testing systems consist of several verification layers working together. Each layer targets specific failure points in the email delivery process.

Content validation comes first. The system checks HTML rendering, image loading, and link functionality. It verifies personalization tokens display correctly and responsive design adapts to different screen sizes.

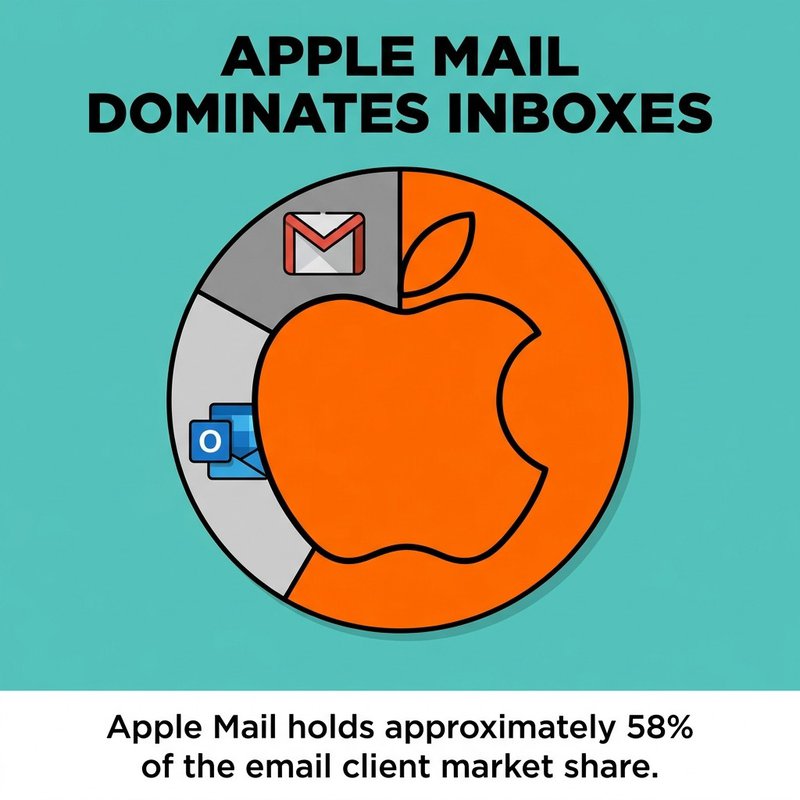

Next comes client compatibility testing. Apple Mail holds approximately 58% of the email client market share, but your emails also need to work in Gmail, Outlook, Yahoo, and dozens of mobile apps. Automated testing previews how your message appears in each environment.

Deliverability testing follows. This includes SPF, DKIM, and DMARC authentication checks, spam score analysis, and inbox placement monitoring. These tests predict whether your email reaches the inbox or gets filtered.

API integration enables the entire workflow. Testing tools connect to your email service provider, pull campaign data automatically, run verification checks, and report results—all without manual intervention.

How Automation Differs From Manual Email Testing

Manual testing requires someone to send test emails, open them in multiple clients, click every link, and document problems. This process takes 15-30 minutes per campaign and misses edge cases.

Automation runs the same checks in under two minutes. It tests more thoroughly because it never gets tired or skips steps.

The coverage difference is substantial. Manual testers typically check 3-5 email clients. Automated systems test 50+ client variations including desktop, mobile, and webmail versions. They catch rendering problems in obscure clients your team would never think to test manually.

Consistency matters too. Humans make different judgment calls about what constitutes a "problem." Automation applies identical standards to every test, making results comparable over time.

Why Email Testing Automation Protects Your Sender Reputation

Your sender reputation determines inbox placement. It's calculated from bounce rates, spam complaints, and engagement metrics. Testing automation helps maintain this reputation by preventing deliverability problems.

Broken emails damage reputation quickly. When subscribers receive messages with missing images, broken layouts, or dead links, they delete without reading or mark as spam. Both actions signal to inbox providers that your content isn't wanted.

The Deliverability Impact Nobody Talks About

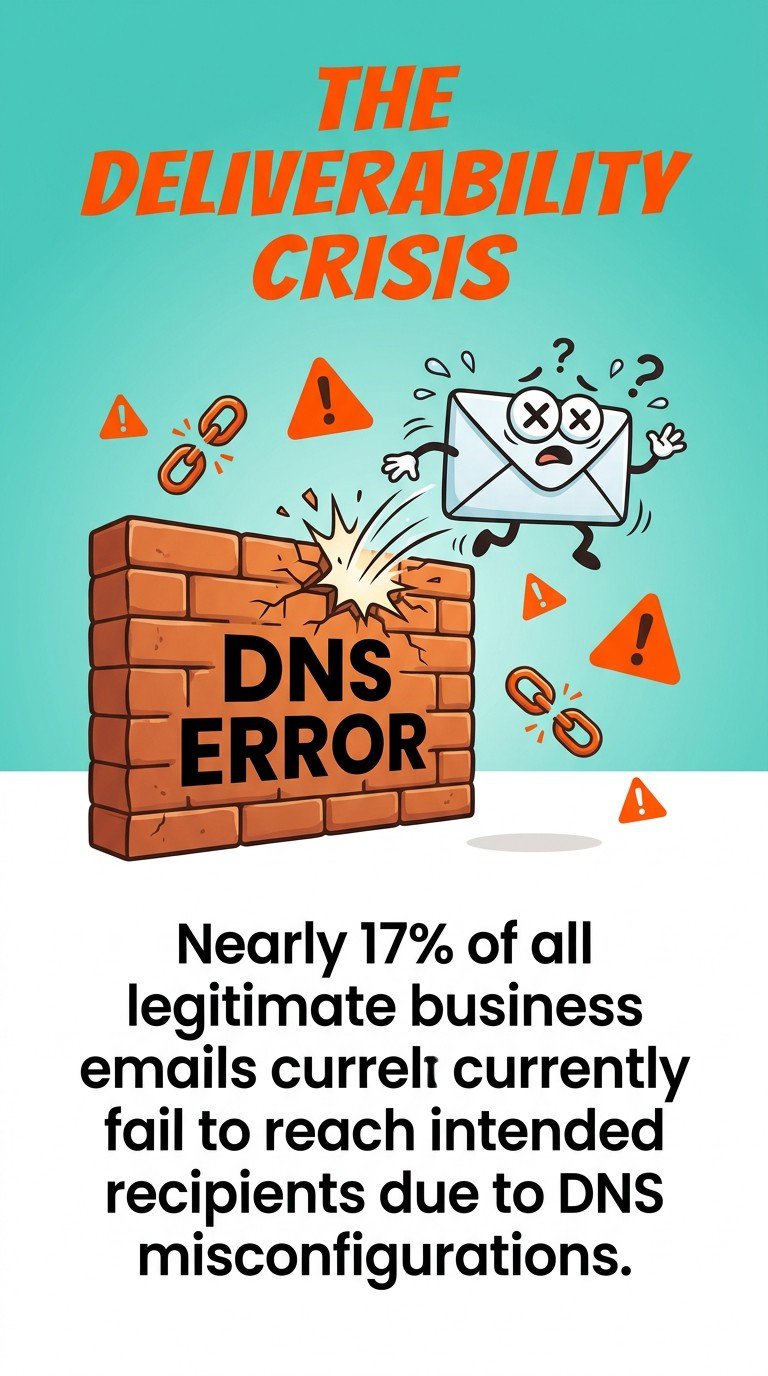

Nearly 17% of all legitimate business emails currently fail to reach intended recipients due to DNS misconfigurations and authentication failures. These aren't spam—they're broken technical setups that automated testing catches before they cause problems.

Authentication failures happen when SPF records change or DKIM signatures break. Manual testing won't notice these issues until deliverability tanks. Automated tests verify authentication on every send.

Spam filter simulation shows you what inbox algorithms see. Testing tools analyze your content for spam trigger words, suspicious formatting, and blacklisted domains. They generate scores that predict filtering likelihood.

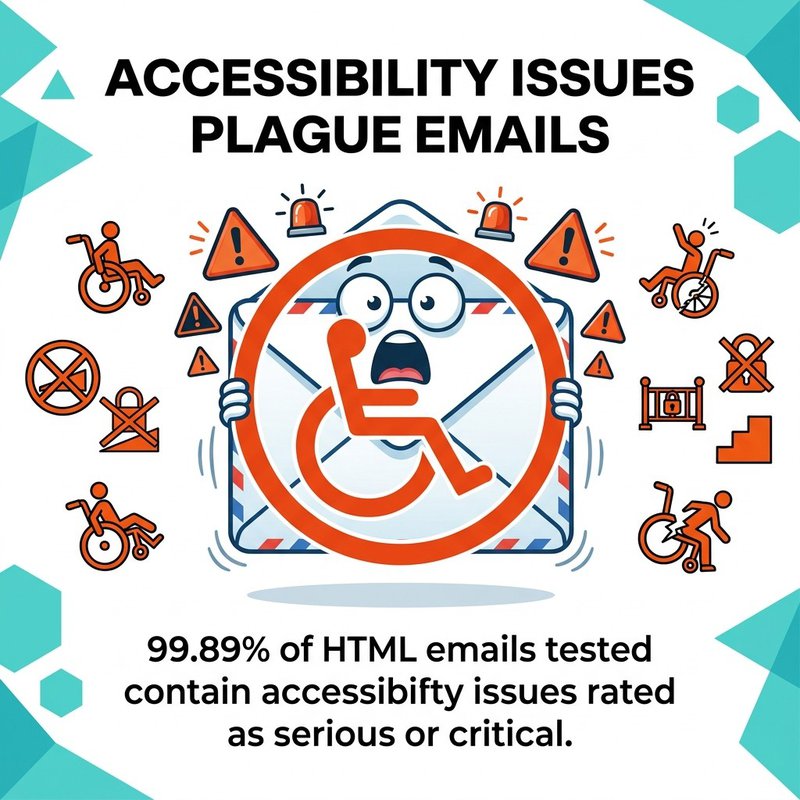

Rendering problems create silent failures. Your email might arrive but display as blank or garbled on certain devices. Subscribers assume you sent broken content and stop opening future messages. 99.89% of HTML emails tested contain accessibility issues rated as serious or critical, many of which also cause rendering failures.

How Automation Scales With Email Volume

Manual testing breaks down as send frequency increases. Teams sending 2-3 campaigns weekly can manually test each one. Teams sending daily transactional emails, triggered sequences, and marketing campaigns cannot.

Automation scales with volume. Whether you send 100 or 100,000 emails monthly, automated testing runs the same verification checks without additional manual effort. The per-email cost of testing drops as volume increases.

This matters for transactional email especially. Password resets, order confirmations, and account notifications must work perfectly every time. Welcome emails achieve approximately 50% open rates with 27% click-through rates—higher than any other email type—making their reliability critical for user experience.

Manual Testing vs Automated Email Testing Trade-offs

Manual testing gives you human judgment. Automated testing gives you speed and coverage. Most teams need both, deployed strategically.

Use manual testing for subjective decisions. Does this copy sound right? Is the design visually appealing? Will subscribers understand this offer? Humans excel at these judgment calls.

When Manual Testing Makes Sense

High-stakes campaigns justify manual review. Annual sale announcements, product launches, and executive communications deserve human eyes before sending. The cost of mistakes outweighs automation savings.

Design validation requires human perception. Automated tools check technical rendering but can't judge aesthetic quality. A designer should review how the email actually looks, not just whether it renders without errors.

New template setup needs manual verification first. Create the template, test it manually across key clients, then set up automated testing for ongoing use. This catches design problems before you automate them.

Segmentation logic testing works better manually. When you're testing complex conditional content that changes based on subscriber attributes, manually verify the logic produces expected results for different segments.

When Automation Should Handle Everything

Transactional emails run on automation exclusively. You can't manually test every password reset or order confirmation. Set up automated testing once, then trust the workflow.

Regular campaigns benefit from automated pre-send checks. Even if a designer reviews the email manually, run automated tests for technical verification. You're checking different things.

Ongoing monitoring requires automation. Deliverability problems don't always appear immediately. Automated systems track inbox placement continuously, alerting you when rates drop unexpectedly.

Multi-client testing becomes impossible manually at scale. Testing 50+ email client variations takes hours manually but minutes with automation. The coverage difference alone justifies automated tools.

| Testing Aspect | Manual Approach | Automated Approach |
|---|---|---|
| Time per campaign | 15-30 minutes | Under 2 minutes |
| Email clients tested | 3-5 major clients | 50+ client variations |
| Authentication verification | Rarely checked | Every send |
| Spam score analysis | Not available | Automated scoring |
| Best for | Design review, copy assessment | Technical verification, deliverability monitoring |

Best Email Testing Automation Tools for Different Needs

The right email testing tool depends on your workflow, technical requirements, and team structure. Some tools excel at visual preview testing while others focus on API-driven automation.

We've found that most teams need different tools for different purposes. Preview testing tools serve designers, while API-focused platforms serve developers building automated workflows.

Visual Preview Testing Platforms

Litmus dominates the email preview market. It renders your email across 100+ email clients and devices, showing exactly how subscribers see your message. The visual side-by-side comparisons make identifying rendering problems straightforward.

Litmus also includes spam testing, link checking, and accessibility analysis. Teams use it primarily for pre-send campaign verification. Upload your HTML, review the previews, fix any rendering issues, then send.

Email on Acid offers similar preview functionality with stronger workflow collaboration features. Multiple team members can review the same test, add comments, and track issue resolution. This works well for agencies managing client campaigns.

Both tools integrate with major email service providers like Mailchimp, HubSpot, and ActiveCampaign. You can test directly from your ESP without exporting HTML files.

API-Driven Testing Solutions

Mailosaur targets developers building automated testing into CI/CD pipelines. It provides disposable email addresses for testing, captures incoming emails, and exposes content through an API for programmatic verification.

Developers use Mailosaur to test email-dependent workflows. Need to verify your password reset emails work? Send to a Mailosaur address, retrieve the email via API, extract the reset link, and confirm it functions correctly—all automated.

Mailtrap offers email sandbox testing for staging environments. It catches all outgoing emails from your development server, preventing test emails from reaching real users. You can inspect content, verify sending logic, and test integrations safely.

Both Mailosaur and Mailtrap include HTML rendering checks, spam analysis, and deliverability testing alongside their API functionality. They bridge the gap between preview tools and full automation.

Deliverability Monitoring Platforms

Validity Everest (formerly Return Path) specializes in ongoing deliverability monitoring. It tracks inbox placement across major providers, monitors blacklist status, and provides sender reputation scoring.

This tool works differently than preview testing. Instead of checking individual campaigns, it monitors your entire sending domain continuously. You receive alerts when deliverability drops or authentication fails.

GlockApps offers seed list testing for inbox placement. Send your campaign to their network of real email accounts, and they report where messages landed: inbox, spam folder, or blocked entirely. Gmail achieves approximately 95% deliverability, so the remaining 5% marks the difference between success and filtering and is worth tracking.

Choosing Your Tool Stack

Most teams combine tools rather than relying on one platform. A typical stack includes a preview tool for designers, an API solution for developers, and deliverability monitoring for ongoing oversight.

Start with preview testing if you're new to email testing automation. Litmus or Email on Acid provide immediate value with minimal setup. You'll catch rendering problems and build testing habits.

Add API testing when you scale transactional email. Once you're sending automated sequences or high volumes of triggered emails, tools like Mailosaur become essential for verifying workflows work correctly.

Layer in deliverability monitoring as sending volume increases. When email becomes a primary revenue channel, continuous monitoring justifies the investment in platforms like Validity Everest.

Key Features That Matter in Email Testing Tools

Not all email testing features provide equal value. Some capabilities look impressive but rarely get used. Others seem basic but prove essential daily.

Focus on features that match your actual workflow. If you don't send to Lotus Notes users, testing Lotus Notes rendering wastes resources. Prioritize what your subscribers actually use.

Client Coverage That Matches Your Audience

Check which email clients your tool tests before buying. Generic "70+ email clients" claims don't help if those clients exclude the ones your audience uses.

Review your own email analytics first. Identify your top 5-10 email clients by open rate. Ensure your testing tool covers all of them, including specific versions if relevant.

Mobile testing deserves special attention. Mobile apps render emails differently than desktop clients. Your tool should test iOS Mail, Gmail mobile app, Outlook mobile, and other popular mobile environments separately from their desktop counterparts.

Dark mode rendering matters now too. Many email clients offer dark mode, which inverts colors and can break designs. Testing tools should show both standard and dark mode rendering.

Speed From Test Submission to Results

Fast testing enables iteration. If results take 10 minutes, you'll test once and hope it's right. If results appear in 60 seconds, you'll test multiple variations and fix problems properly.

Real-time testing works best for campaign optimization. Upload HTML, get instant previews, make changes, test again. This workflow requires sub-minute turnaround times.

Batch testing suits high-volume senders. Queue up multiple emails, run tests overnight, review results in the morning. Speed matters less when you're testing dozens of templates systematically.

API Access for Workflow Integration

API integration enables true automation. Without APIs, you're still manually uploading emails and checking results. APIs let your systems handle testing without human intervention.

CI/CD pipeline integration requires API access. Developers building email functionality need automated tests that run on every code commit. This catches regressions before deployment.

ESP integrations simplify workflows. Direct connections to Mailchimp, Klaviyo, or Brevo let you test campaigns from within your email platform. No HTML export, no file management—just click "test" and review results.

Webhook support enables custom workflows. Configure your testing tool to send results to your project management system, Slack channel, or custom dashboard. Alerts reach the right people automatically.

Actionable Reporting That Guides Fixes

Good testing tools don't just identify problems—they explain how to fix them. Screenshots showing rendering issues help, but guidance on correcting the underlying HTML works better.

Spam testing should explain which elements triggered filters. Knowing you got a spam score of 7.2 helps less than understanding that your subject line contains three spam trigger words and your HTML has suspicious formatting.

Link checking needs detail. "3 broken links found" requires you to hunt for them manually. "Broken links: line 47, line 89, line 124" lets you fix them immediately.
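
To make the "report the line, not just the count" idea concrete, here is a minimal sketch using only Python's standard library. It is not how any particular testing tool works. The function name `find_suspect_links` and the list of allowed URL schemes are our own assumptions, and the check is purely static: it flags empty, placeholder, or malformed links without requesting any URL.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect every <a> tag's href together with its source line number."""

    def __init__(self):
        super().__init__()
        self.links = []  # list of (line_number, href_or_None)

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            line, _column = self.getpos()  # position of the tag being handled
            self.links.append((line, href))


def find_suspect_links(html):
    """Return (line, href) pairs that are empty, placeholder, or malformed.

    Static check only: no network requests are made.
    """
    collector = LinkCollector()
    collector.feed(html)
    allowed = ("http://", "https://", "mailto:", "tel:")  # assumed scheme list
    return [
        (line, href)
        for line, href in collector.links
        if not href or href == "#" or not href.lower().startswith(allowed)
    ]
```

Because each result carries a line number, a report can point straight at the markup to fix. An unrendered merge tag in an `href` would also be flagged here, since it does not start with a known scheme.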

Accessibility reports should prioritize issues. Not all accessibility problems impact users equally. Reports should distinguish between critical issues that block access and minor improvements that enhance experience.

Email Deliverability Testing That Actually Prevents Problems

Deliverability testing predicts inbox placement before you send to real subscribers. It checks authentication, analyzes content for spam signals, and monitors your sender reputation.

Think of deliverability testing as your email's final security check. Content might render perfectly but still land in spam if technical configurations fail.

Authentication Protocol Verification

SPF, DKIM, and DMARC authentication prove you're the legitimate sender. Misconfigured authentication causes inbox providers to reject or filter your email.

SPF records authorize which servers can send email from your domain. Testing tools verify your SPF record exists, covers your actual sending servers, and stays within the limit of 10 DNS lookups that the SPF specification (RFC 7208) allows per check. Common problems include outdated records that exclude new sending infrastructure.
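
As a rough illustration of the lookup check, the sketch below counts the terms in a single SPF record that trigger a DNS query. It assumes you already have the record text, performs no DNS resolution, and does not follow nested `include:` targets, so the true total can be higher. The function name `count_spf_lookups` is ours.

```python
# Terms that cause a DNS lookup during SPF evaluation (RFC 7208, section 4.6.4).
LOOKUP_MECHANISMS = ("include", "a", "mx", "ptr", "exists")
SPF_LOOKUP_LIMIT = 10


def count_spf_lookups(record):
    """Count lookup-triggering terms in one SPF record string.

    Only the given text is inspected; records referenced by include:
    or redirect= are not fetched, so this is a lower bound.
    """
    count = 0
    for term in record.split()[1:]:  # skip the "v=spf1" version tag
        term = term.lstrip("+-~?").lower()  # drop the qualifier, if any
        if term.startswith("redirect="):
            count += 1
            continue
        # "a:example.com/24" and "a/24" both reduce to the mechanism name "a"
        name = term.split(":", 1)[0].split("/", 1)[0]
        if name in LOOKUP_MECHANISMS:
            count += 1
    return count
```

A pre-send check could compare the result against `SPF_LOOKUP_LIMIT` and warn well before the limit is reached, since each included record adds lookups of its own.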

DKIM signatures create cryptographic proof your email wasn't modified in transit. Testing checks whether signatures validate correctly and match your sending domain. Broken DKIM often results from key rotation problems or DNS propagation delays.

DMARC policies tell inbox providers what to do with emails that fail authentication. Testing verifies your DMARC record is published and configured appropriately. It also checks whether your emails pass alignment requirements.
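
A DMARC record is a semicolon-separated list of `tag=value` pairs, so a basic sanity check is easy to sketch. The example below assumes the TXT record has already been fetched from `_dmarc.yourdomain`. The findings it reports are our own choice of checks, not a complete validator, and it does not test alignment.

```python
def parse_dmarc(record):
    """Split a DMARC TXT record into a dict of tag -> value."""
    tags = {}
    for part in record.split(";"):
        if "=" in part:
            key, value = part.split("=", 1)
            tags[key.strip().lower()] = value.strip()
    return tags


def dmarc_findings(record):
    """Return human-readable findings; an empty list means nothing was flagged."""
    tags = parse_dmarc(record)
    findings = []
    if tags.get("v") != "DMARC1":
        findings.append("missing or invalid v=DMARC1 tag")
    policy = tags.get("p", "").lower()
    if policy not in ("none", "quarantine", "reject"):
        findings.append("missing or invalid p= policy")
    elif policy == "none":
        findings.append("p=none only monitors; failing mail is not filtered")
    if "rua" not in tags:
        findings.append("no rua= address, so no aggregate reports are sent")
    return findings
```

For example, `dmarc_findings("v=DMARC1; p=none")` flags both the monitor-only policy and the missing report address.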

Authentication failures cause silent deliverability losses. Emails don't bounce—they just never arrive. Automated testing catches these problems before they impact subscribers.

Spam Filter Content Analysis

Spam filters analyze dozens of content signals. Word choice, HTML structure, link patterns, and sender reputation all contribute to filtering decisions.

Content scoring predicts spam filtering likelihood. Testing tools run your email through algorithms similar to actual spam filters, generating scores that indicate risk. Scores above certain thresholds reliably predict filtering.

Trigger word identification shows problematic language. Words like "free," "guaranteed," or "limited time" increase spam scores, especially in subject lines. Context matters—financial services emails naturally contain different language than e-commerce promotions.

Link analysis checks for suspicious patterns. Too many links, links to blacklisted domains, or URL shorteners all raise spam flags. Testing identifies these issues with recommendations for safer alternatives.

HTML quality affects filtering too. Messy code, excessive styling, or suspicious formatting patterns trigger spam filters. Clean, well-structured HTML passes filters more reliably.
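
The toy scorer below shows the shape of a content check that explains its score. The weights, the link limit, and the shortener list are invented for illustration, and the phrase list is just the three examples mentioned above. Real spam filters use far more signals and do not publish their rules.

```python
import re

TRIGGER_PHRASES = ("free", "guaranteed", "limited time")  # examples from the text
SHORTENER_HOSTS = ("bit.ly", "tinyurl.com")  # illustrative, not exhaustive


def score_content(subject, body_html, max_links=10):
    """Return (score, reasons) using made-up weights; higher means riskier."""
    score = 0.0
    reasons = []
    for phrase in TRIGGER_PHRASES:
        pattern = r"\b" + re.escape(phrase) + r"\b"
        if re.search(pattern, subject, re.IGNORECASE):
            score += 1.0  # subject-line hits are weighted more heavily
            reasons.append(f"subject contains '{phrase}'")
        elif re.search(pattern, body_html, re.IGNORECASE):
            score += 0.5
            reasons.append(f"body contains '{phrase}'")
    urls = re.findall(r'href="([^"]+)"', body_html)
    if len(urls) > max_links:
        score += 1.0
        reasons.append(f"{len(urls)} links (more than {max_links})")
    for url in urls:
        if any(host in url for host in SHORTENER_HOSTS):
            score += 1.0
            reasons.append(f"URL shortener: {url}")
    return score, reasons
```

Returning the reasons alongside the number is the point: a bare score tells you there is a problem, while the reasons tell you what to change.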

Blacklist Monitoring and Reputation Tracking

Your sending IP address and domain build reputation over time. Poor reputation causes filtering regardless of content quality.

Blacklist checking verifies your sending infrastructure isn't listed on spam databases. Hundreds of blacklists exist, but only a few matter for actual filtering. Focus on major lists like Spamhaus, Barracuda, and SURBL.

Reputation scoring aggregates your sending history into a single metric. High reputation means inbox providers trust you. Low reputation triggers increased filtering. Monitor reputation trends rather than absolute scores.

Complaint rate tracking shows how many subscribers mark your email as spam. Rates above 0.1% indicate serious problems. Rates above 0.3% risk blacklisting. Automated testing can't prevent complaints, but monitoring alerts you to problems quickly.
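
Those two thresholds translate directly into an alerting rule. This is a small sketch with a function name and status labels of our own choosing. It takes the complaint and delivered counts from whatever reporting source you use.

```python
def complaint_status(complaints, delivered):
    """Classify a complaint rate against the 0.1% and 0.3% thresholds."""
    if delivered <= 0:
        raise ValueError("delivered must be positive")
    rate = complaints / delivered
    if rate > 0.003:  # above 0.3%: blacklisting risk
        return "critical"
    if rate > 0.001:  # above 0.1%: serious problem
        return "warning"
    return "ok"
```

For instance, 20 complaints on 10,000 delivered emails is a 0.2% rate, which this function reports as `"warning"`.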

Engagement metrics influence deliverability indirectly. Inbox providers notice when subscribers consistently delete your emails without reading or never click links. Testing tools track these signals as early warnings.

Testing Across Email Clients and Devices Without Losing Your Mind

Email clients render HTML inconsistently. The same code displays differently in Gmail, Outlook, Apple Mail, and dozens of other clients. Testing catches these variations before subscribers see broken designs.

Cross-client testing used to require maintaining devices running every email client variation. Automation eliminates this hardware nightmare.

Why Email Client Rendering Varies So Much

Email clients use different rendering engines. Outlook for Windows desktop uses Microsoft Word's rendering engine, which lacks support for much of modern CSS. Gmail strips certain styles for security. Apple Mail renders with WebKit and supports most modern CSS, but it does not always behave exactly like a WebKit browser such as Safari.

These engine differences create unpredictable results. A responsive design that works perfectly in Apple Mail might break completely in Outlook 2016. Background images display in some clients but not others.

Mobile apps add another layer of complexity. The Gmail mobile app doesn't render identically to Gmail in a mobile browser. iOS Mail behaves differently than the Mail app on macOS. Android email clients fragment across manufacturers.

Dark mode multiplies testing requirements. Each client implements dark mode differently. Some invert all colors automatically, breaking carefully designed color schemes. Others respect embedded styles but require specific coding patterns.

Prioritizing Which Clients Actually Matter

You can't test everything. Prioritize clients based on your subscriber data, not generic market statistics.

Check your email analytics for client breakdowns. Most ESPs provide reports showing which clients subscribers use to open your emails. Focus testing on clients representing 80% of your opens.
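
The 80% rule is simple to compute once you export opens by client from your ESP. The sketch below assumes a plain dictionary of client name to open count. The function name and sample numbers are hypothetical.

```python
def priority_clients(opens_by_client, coverage=0.80):
    """Pick the most-used clients until they cover the target share of opens."""
    total = sum(opens_by_client.values())
    if total == 0:
        return []
    selected = []
    covered = 0
    ranked = sorted(opens_by_client.items(), key=lambda item: item[1], reverse=True)
    for client, opens in ranked:
        if covered / total >= coverage:
            break  # target share already reached
        selected.append(client)
        covered += opens
    return selected


# Hypothetical open counts for one sender
opens = {"Apple Mail": 450, "Gmail": 300, "Outlook": 150, "Yahoo Mail": 60, "Other": 40}
print(priority_clients(opens))
```

With these sample numbers the first two clients cover 75% of opens, so a third is added to pass the 80% target.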

Mobile-first testing makes sense for most lists. Mobile clients typically account for 60-70% of email opens. Ensure your emails work perfectly on iOS Mail, Gmail mobile, and Outlook mobile before worrying about desktop variations.

Webmail testing comes next. Gmail, Yahoo Mail, and Outlook.com (formerly Hotmail) host millions of email accounts. These webmail clients render emails directly in browsers, creating different challenges than native apps.

Legacy client testing depends on your audience. B2B senders often need Outlook desktop compatibility because corporate environments use it. B2C senders can often skip Outlook testing if their data shows minimal usage.

Automated Testing Workflows for Multi-Client Coverage

Manual multi-client testing doesn't scale. Set up automated workflows that test all priority clients on every campaign.

Integration with your ESP creates the simplest workflow. Connect your testing tool to Mailchimp, Klaviyo, or ActiveCampaign. Before sending, click test. The tool pulls your email, renders it across all configured clients, and displays results.

Scheduled testing suits template monitoring. Set up daily or weekly automated tests of your email templates. Get alerted if rendering breaks in any client, indicating a template corruption or ESP platform change.

Pre-deployment testing works for transactional emails. Configure your development environment to automatically test transactional email templates before deploying code changes. Catch rendering problems during development rather than after deployment.

Side-by-side preview layouts help identify problems quickly. Testing tools display your email rendered across multiple clients simultaneously. Scan visually for layout shifts, missing images, or formatting problems.

API-Based Email Testing for Developers and QA Teams

API-driven testing enables automated verification without manual review. Developers write tests that programmatically check email content, validate workflows, and confirm functionality.

This approach suits teams building email-dependent applications. If your product sends password resets, notifications, or receipts, API testing ensures these critical emails work reliably.

Building Automated Test Suites for Email Workflows

Email testing APIs provide programmatic access to email content. Send test emails to special addresses, retrieve them via API, then verify the content matches expectations.

Mailosaur and Mailtrap both offer this functionality. Create test email addresses, configure your application to send there during testing, then use API calls to retrieve and inspect the emails.

Integration with testing frameworks makes automation practical. Most programming languages have email testing libraries that wrap the API calls in test-friendly interfaces. Write tests that read like "expect password reset email to contain valid reset link."

CI/CD integration catches regressions automatically. Configure your continuous integration pipeline to run email tests on every commit. Failed tests block deployment until developers fix the broken email functionality.

Testing Transactional Email Triggers and Content

Transactional emails must work perfectly every time. Users depend on password resets, order confirmations, and account notifications. Testing verifies these critical paths.

Trigger testing confirms emails send at the right moment. Create a test account, perform the trigger action (like requesting a password reset), then verify the email arrives within acceptable timeframes. APIs let you check email arrival programmatically.
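
Checking arrival programmatically usually means polling an inbox API until a message shows up or a deadline passes. The helper below is a generic sketch: `fetch` is a hypothetical callable that you would write around your testing tool's client, and it should return `None` while nothing has arrived. No vendor API is assumed.

```python
import time


def wait_for_email(fetch, timeout=30.0, interval=1.0,
                   sleep=time.sleep, clock=time.monotonic):
    """Call fetch() until it returns a message or `timeout` seconds pass.

    fetch is a hypothetical wrapper around your inbox API; it returns
    None while no matching message has arrived. sleep and clock are
    injectable so the helper can be tested without real waiting.
    """
    deadline = clock() + timeout
    while True:
        message = fetch()
        if message is not None:
            return message
        if clock() >= deadline:
            raise TimeoutError(f"no email arrived within {timeout} seconds")
        sleep(interval)
```

In a test, you would trigger the action (for example, request a password reset), then call `wait_for_email` with a timeout that matches your acceptable delivery window, so a slow email fails the test instead of hanging it.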

Content validation ensures personalization works correctly. Test emails should contain the right user name, order details, or account information. Extract these values via API and compare against expected test data.

Link functionality testing validates email actions work. Extract password reset links, confirmation buttons, or verification URLs from test emails. Visit those links programmatically and confirm they produce expected results.
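
Once a test email has been retrieved, extracting the action link is mostly string handling. The sketch below parses a raw message with Python's standard `email` package and looks for a link on an expected host that carries a `token` query parameter. The parameter name and the example host are assumptions, and HTML-escaped URLs (such as `&amp;` in an `href`) would need unescaping first.

```python
import re
from email import message_from_string
from urllib.parse import parse_qs, urlparse


def extract_links(raw_message):
    """Return every http(s) URL found in the text parts of a raw email."""
    msg = message_from_string(raw_message)
    links = []
    for part in msg.walk():
        if part.get_content_type() in ("text/plain", "text/html"):
            payload = part.get_payload(decode=True)  # bytes for leaf parts
            charset = part.get_content_charset() or "utf-8"
            text = payload.decode(charset, errors="replace")
            links.extend(re.findall(r"https?://[^\s\"'<>]+", text))
    return links


def find_reset_link(raw_message, expected_host):
    """Return the first link on expected_host with a token parameter, else None."""
    for link in extract_links(raw_message):
        parsed = urlparse(link)
        if parsed.hostname == expected_host and "token" in parse_qs(parsed.query):
            return link
    return None
```

The next step in a real test would be to request the returned URL with an HTTP client and assert on the response. That step is left out here to keep the sketch free of network calls.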

Template rendering verification catches design problems. Even transactional emails have designs. API testing can validate HTML structure, check for broken image references, and confirm responsive design works across device sizes.

Integrating Email Verification into Development Workflows

Email testing belongs in your development process, not as an afterthought. Build it into workflows from the start.

Local development testing uses email sandboxes. Tools like Mailtrap catch all outgoing emails from your development machine. You can inspect them without risk of sending test emails to real users—a disaster that's happened to every developer eventually.

Staging environment testing validates pre-production email functionality. Configure staging to use testing APIs instead of production email services. This lets you verify complete workflows safely.

Production monitoring extends testing beyond development. Set up synthetic transactions that trigger email workflows in production, monitoring for failures. This catches problems caused by production-only configurations or third-party service issues.

At mailfloss, we use API-based verification to ensure our own email notifications work correctly. When we send verification results or list cleaning reports, automated tests confirm those emails arrive properly formatted with correct data. It's the same principle—automate what you can, catch problems early.

Implementing End-to-End Email Testing Strategies

Complete email testing covers the entire lifecycle. From template creation through sending and engagement, each stage needs verification.

End-to-end strategies catch problems that single-point testing misses. A template might render perfectly but use a broken unsubscribe link. Automation can test both.

Pre-Send Campaign Validation Checklists

Create standardized pre-send checklists that automation executes. Every campaign runs through the same verification steps before reaching subscribers.

Your checklist should include rendering tests across priority email clients, link validation for every clickable element, spam score analysis with threshold requirements, authentication verification, and personalization testing with sample subscriber data.

Automated checklists prevent human error. Marketing teams get busy and skip steps. Automation runs every check consistently, blocking sends until all criteria pass.

Threshold-based approvals create quality gates. Configure automation to require spam scores below 3.0, zero broken links, and successful rendering in all priority clients. Campaigns that fail these thresholds can't send until fixed.
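
A quality gate like this can be expressed as one function that turns test results into a list of blocking failures. The shape of the `results` dictionary below is hypothetical. You would assemble it from whatever your testing tools return.

```python
def evaluate_gate(results, priority_clients, max_spam_score=3.0):
    """Return failure messages; an empty list means the campaign may send.

    results is a hypothetical dict with these keys:
      spam_score   -> float
      broken_links -> list of URLs
      rendering    -> dict of client name -> True if it rendered correctly
    """
    failures = []
    if results["spam_score"] >= max_spam_score:
        failures.append(
            f"spam score {results['spam_score']} is not below {max_spam_score}"
        )
    if results["broken_links"]:
        failures.append(f"{len(results['broken_links'])} broken link(s)")
    for client in priority_clients:
        # A client missing from the results counts as a failure, not a pass.
        if not results["rendering"].get(client, False):
            failures.append(f"rendering failed or untested in {client}")
    return failures
```

Treating an untested priority client as a failure is a deliberate choice in this sketch: a gate that passes when data is missing is not much of a gate.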

| Testing Stage | What to Verify | Automation Tool |
|---|---|---|
| Template creation | HTML quality, accessibility, basic rendering | Preview testing platforms |
| Content review | Links, personalization, spam triggers | Content analysis tools |
| Pre-send validation | Authentication, deliverability score, final rendering | Deliverability testing platforms |
| Post-send monitoring | Inbox placement, engagement rates, bounce tracking | Analytics and monitoring tools |

Post-Send Monitoring and Performance Tracking

Testing doesn't end when emails send. Post-send monitoring catches deliverability problems and engagement issues that pre-send automation can't predict.

Inbox placement tracking shows where your emails actually land. GlockApps seed lists send copies of your email to monitored accounts, reporting whether they reached inbox or spam folders. This reveals filtering problems that pre-send testing missed.

Engagement rate monitoring identifies campaigns that underperform. Unusually low open rates might indicate subject line problems or sender reputation issues. Low click rates suggest content or design failures.

Bounce analysis separates temporary from permanent failures. Hard bounces indicate invalid addresses requiring removal. Soft bounces might resolve on retry or indicate temporary server issues. mailfloss automates this analysis, removing invalid addresses before they damage your sender reputation.
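
As a simplified illustration of the hard versus soft distinction (not a description of how mailfloss or any other product implements it), the sketch below classifies bounces by SMTP reply code class: 5xx replies are permanent failures and 4xx replies are transient. The rule of removing an address after repeated soft bounces, and the default of three, are assumptions.

```python
def classify_bounce(smtp_code):
    """Classify by SMTP reply code class: 5xx is permanent, 4xx is transient."""
    if 500 <= smtp_code <= 599:
        return "hard"
    if 400 <= smtp_code <= 499:
        return "soft"
    return "unknown"


def addresses_to_remove(bounces, max_soft=3):
    """Return addresses with a hard bounce or at least max_soft soft bounces.

    bounces is an iterable of (address, smtp_code) pairs.
    """
    soft_counts = {}
    remove = set()
    for address, code in bounces:
        kind = classify_bounce(code)
        if kind == "hard":
            remove.add(address)
        elif kind == "soft":
            soft_counts[address] = soft_counts.get(address, 0) + 1
            if soft_counts[address] >= max_soft:
                remove.add(address)
    return remove
```

Real bounce handling needs more nuance than this. Some 5xx replies reflect policy blocks, not invalid mailboxes, so production systems typically also inspect the enhanced status code and the reply text.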

Complaint tracking alerts you to subscriber dissatisfaction. Spam complaints damage reputation faster than any other metric. Monitoring helps you identify problematic campaigns and adjust future sends.

Building Feedback Loops Into Testing Workflows

Use post-send data to improve pre-send testing. Results from sent campaigns should inform future testing criteria.

If certain spam triggers consistently correlate with poor performance, add them to your automated content analysis. If specific email clients show high delete rates, prioritize their rendering testing more heavily.

Create custom test profiles matching your actual subscriber distribution. If 40% of your subscribers use Gmail mobile, weight your testing toward that client. If you send primarily to corporate domains using Outlook, prioritize Outlook testing.

Track testing accuracy over time. When your automated tests predict problems that don't manifest in actual sends, adjust sensitivity. When real problems slip through testing, identify the gap and enhance coverage.
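
One way to track this is to log, for each campaign, whether testing predicted a problem and whether one actually occurred, then summarize false alarms and misses. The function below is a sketch with names of our own. What counts as an "actual problem" is something your team has to define.

```python
def gate_accuracy(history):
    """Summarize prediction quality.

    history is an iterable of (predicted_problem, actual_problem) booleans,
    one pair per campaign.
    """
    true_pos = false_alarms = missed = 0
    for predicted, actual in history:
        if predicted and actual:
            true_pos += 1
        elif predicted and not actual:
            false_alarms += 1  # test flagged a problem that never appeared
        elif actual and not predicted:
            missed += 1  # real problem slipped through testing
    flagged = true_pos + false_alarms
    occurred = true_pos + missed
    return {
        "false_alarms": false_alarms,
        "missed": missed,
        "precision": true_pos / flagged if flagged else None,
        "recall": true_pos / occurred if occurred else None,
    }
```

Low precision suggests the tests are too sensitive and thresholds can be relaxed. Low recall points to a coverage gap that needs a new check.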

Email Testing Best Practices That Save Time and Prevent Headaches

Effective email testing automation requires deliberate setup. Random testing catches some problems but wastes effort on low-value checks.

We've found that strategic testing—focusing on high-impact areas—produces better results with less effort than trying to test everything.

Start With Template-Level Testing, Not Campaign-Level

Test your email templates once, thoroughly. Then test campaigns using those templates less rigorously.

Template testing should be exhaustive. Check rendering across all email clients, validate all dynamic content blocks, verify responsive design at multiple screen sizes, and confirm accessibility standards. Do this once when creating or updating templates.

Campaign testing can focus on content-specific issues. Verify links go to correct destinations, personalization merges properly, and subject lines don't trigger spam filters. Skip the rendering checks you already performed at template level.

This two-tier approach reduces redundant testing. You're not checking whether the template renders correctly in Outlook every single send—you already know it does.

Template change tracking triggers retesting. When you modify a template, run full testing again. When you use an unchanged template for a new campaign, run abbreviated tests.
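
Detecting a template change can be as simple as comparing content hashes. In the sketch below, `known_fingerprints` is a plain dictionary standing in for wherever you would persist this state, and the suite names `"full"` and `"abbreviated"` are placeholders.

```python
import hashlib


def template_fingerprint(html):
    """Return a stable SHA-256 fingerprint of the template source."""
    return hashlib.sha256(html.encode("utf-8")).hexdigest()


def choose_test_suite(template_id, html, known_fingerprints):
    """Return "full" if the template is new or changed, else "abbreviated".

    known_fingerprints maps template_id -> fingerprint and is updated in
    place. In practice you might record the new fingerprint only after the
    full suite has passed.
    """
    fingerprint = template_fingerprint(html)
    if known_fingerprints.get(template_id) == fingerprint:
        return "abbreviated"
    known_fingerprints[template_id] = fingerprint
    return "full"
```

Any edit to the template source, even whitespace, changes the fingerprint and triggers the full suite. That errs on the side of retesting.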

Automate the Boring Parts, Review What Matters

Let automation handle mechanical verification. Reserve human review for subjective decisions.

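Automation should check link functionality, authentication configuration, spam scores, rendering accuracy, and image loading. These checks have objective pass/fail criteria: a link either resolves or it doesn't, and a spam score either clears your threshold or it doesn't.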

Humans should review copy tone, design aesthetics, offer clarity, and brand consistency. These require judgment automation can't provide.

Hybrid workflows combine both effectively. Run automated tests first. Fix any failures. Then have a designer review the aesthetics and a copywriter check the messaging. Each party focuses on what they do best.

Test on Staging Before Production

Never test email workflows in production environments. Staging environments let you break things safely.

Staging should mirror production configuration. Use the same ESP, similar sending domain authentication, and identical templates. The only difference should be recipient addresses—route everything to test accounts.

Email sandboxes prevent accidental real sends. Configure staging to use Mailtrap or similar services that catch all outgoing email. This prevents the nightmare scenario where test emails reach actual subscribers.
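A sandbox is the first line of defense; a recipient guard in your own sending code can serve as a second. The sketch below assumes your application knows which environment it runs in and that test accounts live on a dedicated domain. The domain, the environment names, and `assert_safe_recipients` are all hypothetical.

```python
# Hypothetical allowlist of domains that only contain test inboxes.
ALLOWED_TEST_DOMAINS = {"example.test"}


class UnsafeRecipientError(Exception):
    """Raised when a non-production send targets a real-looking address."""


def assert_safe_recipients(recipients: list[str], environment: str) -> None:
    """Refuse to send outside production unless every recipient is a test account."""
    if environment == "production":
        return  # Real sends are expected here; no restriction applied.
    blocked = [
        r for r in recipients
        if r.rpartition("@")[2].lower() not in ALLOWED_TEST_DOMAINS
    ]
    if blocked:
        raise UnsafeRecipientError(
            f"{environment} send blocked; non-test recipients: {blocked}"
        )
```

Calling this just before every send means a misconfigured staging environment fails loudly instead of emailing subscribers.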

Promotion to production should require testing approval. Automated checks must pass in staging before code or templates deploy to production. This prevents broken changes from affecting real subscribers.

Document Testing Criteria and Share Results

Establish clear testing standards everyone understands. Document what constitutes pass/fail for each test type.

Spam score thresholds need definition. Decide your acceptable maximum score and enforce it. Some teams use 3.0, others 5.0. The specific number matters less than consistent application.

Rendering acceptance criteria should specify supported clients. List exactly which email clients must render correctly. This prevents arguments about whether supporting Lotus Notes is necessary.
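Writing the criteria down as data keeps them unambiguous and lets the same definition drive automated gates. The following sketch assumes a maximum spam score of 5.0 and a short list of required clients purely as examples; `AcceptanceCriteria` and `evaluate` are illustrative names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceptanceCriteria:
    # Example values only; pick the threshold and clients your team agrees on.
    max_spam_score: float = 5.0
    required_clients: frozenset = frozenset({"gmail", "outlook", "apple_mail"})


def evaluate(criteria: AcceptanceCriteria, spam_score: float,
             clients_rendered_ok: set) -> list:
    """Return human-readable failures; an empty list means the email passes."""
    failures = []
    if spam_score > criteria.max_spam_score:
        failures.append(
            f"spam score {spam_score} exceeds maximum {criteria.max_spam_score}"
        )
    missing = sorted(criteria.required_clients - set(clients_rendered_ok))
    if missing:
        failures.append(f"rendering not verified in: {', '.join(missing)}")
    return failures
```

The returned messages double as the text you share with stakeholders when a check fails.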

Share test results with stakeholders. When automated testing catches problems, notify the marketing team, designers, and developers. Transparency builds trust in the testing process and helps everyone learn from issues.

Success metrics guide process improvement. Track how many problems automated testing catches before sends. Measure deliverability improvements after implementing testing. Use data to justify continued investment.

Making Email Testing Automation Actually Work for Your Team

You now understand what automated email testing does and which tools enable it. Implementation determines whether testing delivers value or becomes shelfware.

Start with one workflow. Don't try to automate everything immediately. Pick your highest-volume email type and implement automated testing there first.

For marketing teams, start with your regular newsletter or promotional campaigns. Set up preview testing with Litmus or Email on Acid. Run tests before every send for one month. Track the problems you catch and calculate the time saved.

For development teams, focus on transactional email testing first. Configure Mailosaur or Mailtrap for your most critical email workflow—usually password resets or account confirmations. Write automated tests that verify these emails send correctly.
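A transactional email test typically fetches the captured message from the sandbox inbox and asserts on its headers and body. The sketch below covers only the assertion step, using Python's standard `email` package on a raw message string; how you retrieve the raw message depends on your sandbox provider and is not shown. The reset URL shape and `check_password_reset` are assumptions.

```python
import re
from email import message_from_string, policy

# Assumed shape of a reset link; adapt to your application's URLs.
RESET_LINK = re.compile(r"https://\S+/reset\?token=\w+")


def check_password_reset(raw_email: str, expected_to: str) -> list[str]:
    """Return a list of problems found in a captured password reset email."""
    msg = message_from_string(raw_email, policy=policy.default)
    problems = []

    if str(msg["To"] or "") != expected_to:
        problems.append("wrong recipient")
    if "reset" not in str(msg["Subject"] or "").lower():
        problems.append("subject does not mention reset")

    # Prefer the plain-text part, falling back to HTML for multipart messages.
    body_part = msg.get_body(preferencelist=("plain", "html"))
    body = body_part.get_content() if body_part is not None else ""
    if not RESET_LINK.search(body):
        problems.append("no reset link found")
    return problems
```

In a test suite, you would trigger the reset flow, pull the newest message for the test address, and assert that this function returns an empty list.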

Expand gradually after proving value. Add deliverability monitoring once testing becomes routine. Integrate API testing after transactional workflows stabilize. Layer in accessibility checks after core functionality works reliably.

Connect testing to tools you already use. If your team lives in Slack, configure webhooks that post test results to relevant channels. If you use project management software, integrate testing alerts there. Meet your team where they work rather than forcing new tools.
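For Slack, an incoming webhook accepts a JSON payload with a `text` field sent via HTTP POST. The sketch below builds and sends such a request with the standard library. The message wording, the function names, and the webhook URL you pass in are assumptions; keep the real URL in a secret store, not in code.

```python
import json
import urllib.request


def build_slack_request(webhook_url: str, suite: str, passed: int,
                        failed: int) -> urllib.request.Request:
    """Build a POST request that reports test results to a Slack incoming webhook."""
    status = "passed" if failed == 0 else "FAILED"
    payload = {
        "text": f"Email tests for {suite} {status}: {passed} passed, {failed} failed"
    }
    return urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def post_results(webhook_url: str, suite: str, passed: int, failed: int) -> int:
    """Send the report and return the HTTP status code."""
    request = build_slack_request(webhook_url, suite, passed, failed)
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status
```

Separating request construction from sending keeps the message format easy to unit test without touching the network.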

The goal isn't perfect testing coverage. The goal is catching the problems that matter before they reach subscribers. AI-powered content blocks drive 18-fold more revenue per recipient than one-time sends, but those sophisticated emails require robust testing to ensure they work correctly. Segmentation-led campaigns generate 760% more revenue than non-segmented broadcasts, making the additional complexity worth managing through automated testing.

Remember that email validation test cases form the foundation of effective testing strategies. Combined with proper API integration and comprehensive automation workflows, you build a testing system that scales with your email program. Your subscribers receive emails that work correctly, your sender reputation stays protected, and your team spends time creating better campaigns instead of manually checking the same things repeatedly.

Testing automation isn't about perfection. It's about systematically preventing the problems that damage deliverability and frustrate subscribers. Start small, prove value, then expand coverage as you see results.